Linear SVM

The loss function for a logistic regression model is given by

The output of the logistic regression is defined as
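
For reference, these two expressions are commonly written as sketched below. The symbols are assumptions rather than the original notation: w is the weight vector, x_i an input, t_i in {0, 1} its target, y_i the model output, and m the number of samples. Some texts also scale the loss by 1/m.

```latex
y_i = \sigma(w^\top x_i) = \frac{1}{1 + e^{-w^\top x_i}}
\qquad\text{and}\qquad
L = -\sum_{i=1}^{m} \left[ t_i \log(y_i) + (1 - t_i) \log(1 - y_i) \right]
```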

Ideally, the weights should be kept as small as possible. To encourage this, we employ L2 regularization. This also has the side effect of weight decay, where the weights of non-contributing features tend to zero.

where ![]() is a regularization parameter.
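
As a rough illustration, the regularized loss can be computed as in the sketch below. The function names, the parameter name `lam`, and the (lam / 2) scaling of the penalty are assumptions made for this example, not taken from the original formulas.

```python
import math

def sigmoid(z):
    """Logistic function mapping a real score to (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def regularized_logistic_loss(w, X, t, lam):
    """Summed cross-entropy plus an L2 penalty (lam / 2) * ||w||^2.

    w: list of weights, X: list of feature lists, t: list of 0/1 targets,
    lam: regularization strength (assumed name and scaling).
    """
    eps = 1e-12  # guards against log(0)
    total = 0.0
    for x_i, t_i in zip(X, t):
        y_i = sigmoid(sum(w_j * x_j for w_j, x_j in zip(w, x_i)))
        total -= t_i * math.log(y_i + eps) + (1 - t_i) * math.log(1 - y_i + eps)
    penalty = 0.5 * lam * sum(w_j * w_j for w_j in w)
    return total + penalty
```

A larger `lam` makes large weights more expensive, which is what pushes the weights of uninformative features toward zero.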

The functions log(y) and log(1 - y) can be replaced by approximated cost functions.
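
One common choice of approximation is the piecewise-linear hinge, sketched below next to the logistic costs it stands in for. The names `cost1` and `cost0` and the hinge form itself are assumptions, since the original formulas are not shown; `z` denotes the raw score w·x.

```python
import math

def log_cost_pos(z):
    """-log(sigmoid(z)): logistic cost for a positive example."""
    return math.log(1.0 + math.exp(-z))

def log_cost_neg(z):
    """-log(1 - sigmoid(z)): logistic cost for a negative example."""
    return math.log(1.0 + math.exp(z))

def cost1(z):
    """Hinge approximation for a positive example: zero once z >= 1."""
    return max(0.0, 1.0 - z)

def cost0(z):
    """Hinge approximation for a negative example: zero once z <= -1."""
    return max(0.0, 1.0 + z)
```

Unlike the logistic costs, which never reach zero, the hinge costs are exactly zero for examples scored beyond +1 or -1, so only examples near or across the boundary contribute to the loss.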

Now, divide L by ![]().

Let ![](). Then

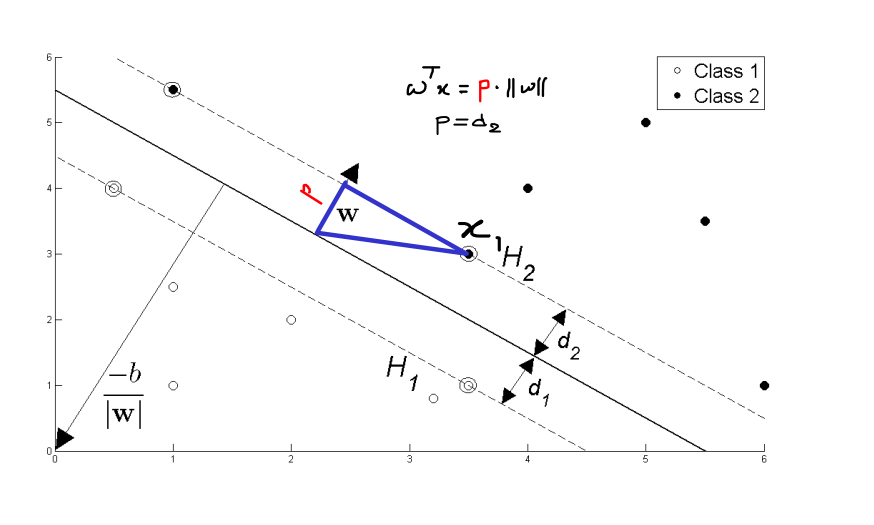
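
A sketch of how this rescaling usually goes is given below. It assumes the regularization parameter is called λ, the penalty is (λ/2)‖w‖², the approximated costs are named cost₁ and cost₀, and the new constant is C = 1/λ. None of these names are confirmed by the original formulas.

```latex
\begin{aligned}
L &= \sum_{i=1}^{m} \left[ t_i \,\mathrm{cost}_1(w^\top x_i) + (1 - t_i)\,\mathrm{cost}_0(w^\top x_i) \right] + \frac{\lambda}{2} \lVert w \rVert^2 \\
\frac{L}{\lambda} &= \frac{1}{\lambda} \sum_{i=1}^{m} \left[ t_i \,\mathrm{cost}_1(w^\top x_i) + (1 - t_i)\,\mathrm{cost}_0(w^\top x_i) \right] + \frac{1}{2} \lVert w \rVert^2 \\
&= C \sum_{i=1}^{m} \left[ t_i \,\mathrm{cost}_1(w^\top x_i) + (1 - t_i)\,\mathrm{cost}_0(w^\top x_i) \right] + \frac{1}{2} \lVert w \rVert^2,
\qquad C = \frac{1}{\lambda}
\end{aligned}
```

Dividing by a positive constant does not change the minimizing w. It only moves the trade-off knob from the penalty term to the data term.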

This is equivalent to maximizing the margin, given by ![](), between the hyperplane and the data points in both classes.
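
To make the connection concrete, here is a minimal sketch that minimizes an objective of the form C * (sum of hinge costs) + 0.5 * ||w||^2 by batch subgradient descent. The function names, the bias term `b`, the default hyperparameters, and the use of labels in {-1, +1} (instead of {0, 1}) are all assumptions made for this example.

```python
def dot(u, v):
    """Inner product of two equal-length lists."""
    return sum(u_j * v_j for u_j, v_j in zip(u, v))

def train_linear_svm(X, t, C=1.0, lr=0.01, epochs=1000):
    """Minimize C * sum(max(0, 1 - t_i * (w.x_i + b))) + 0.5 * ||w||^2.

    X: list of feature lists, t: list of labels in {-1, +1}.
    Returns the learned weights w and bias b.
    """
    w = [0.0] * len(X[0])
    b = 0.0
    for _ in range(epochs):
        grad_w = list(w)  # gradient of 0.5 * ||w||^2
        grad_b = 0.0
        for x_i, t_i in zip(X, t):
            # Only examples inside the margin or misclassified contribute.
            if t_i * (dot(w, x_i) + b) < 1.0:
                for j, x_ij in enumerate(x_i):
                    grad_w[j] -= C * t_i * x_ij
                grad_b -= C * t_i
        w = [w_j - lr * g_j for w_j, g_j in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b
```

For example, `w, b = train_linear_svm([[2.0, 2.0], [3.0, 3.0], [-2.0, -2.0], [-3.0, -3.0]], [1, 1, -1, -1])` returns a separating hyperplane for this toy data. Shrinking ||w|| while keeping every example at a score of at least 1 in magnitude is what widens the margin.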

Here is how – Ideally,

But this can be written as (from the projection theorem)

The aim of SVM is to maximize this distance, i.e. maximize d2. Hence it is equivalent to minimizing ||w||.
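
The relationship between ||w|| and the margin can be checked numerically with the short sketch below. It assumes the usual canonical scaling, in which the closest points on each side satisfy w·x + b = +1 and w·x + b = -1. The helper names are hypothetical.

```python
import math

def norm(w):
    """Euclidean length of the weight vector."""
    return math.sqrt(sum(w_j * w_j for w_j in w))

def distance_to_hyperplane(w, b, x):
    """Perpendicular distance from x to the hyperplane w.x + b = 0."""
    score = sum(w_j * x_j for w_j, x_j in zip(w, x)) + b
    return abs(score) / norm(w)

def margin_width(w):
    """Distance between the planes w.x + b = +1 and w.x + b = -1."""
    return 2.0 / norm(w)
```

With `w = [2.0, 0.0]` and `b = 0.0`, the points `[0.5, 0.0]` and `[-0.5, 0.0]` score exactly +1 and -1, each lies 0.5 from the hyperplane, and `margin_width(w)` is 1.0. Halving w to `[1.0, 0.0]` doubles the width to 2.0, which is why a smaller ||w|| means a larger margin.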

Kernel Trick

Instead of just ![](), one can use ![](). Generally, ![]() will be a Gaussian function, so inputs closer to the support vectors get activated, and you try to minimize the cost function involving the kernels. Gaussian kernels are also called Radial Basis Functions (RBF) because of the shape of the activation.
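
A minimal sketch of a Gaussian (RBF) kernel is shown below. The function name, the argument name `landmark` (standing for a support vector), and the bandwidth parameter `sigma` are assumptions made for this example.

```python
import math

def rbf_kernel(x, landmark, sigma=1.0):
    """Gaussian similarity: 1 when x equals the landmark, near 0 far away."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, landmark))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))
```

The value depends only on the distance between `x` and the landmark, which gives the radially symmetric bump the name refers to. A smaller `sigma` makes the bump narrower, so only very close inputs are activated.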

Extra Notes

The vector c is also called the Lagrange multiplier. It is used to find the support vectors that maximize the margin.

For a complete explanation, refer to this link.